In the pantheon of classic gaming hardware, certain chips have achieved legendary status. The MOS Technology SID chip gave the Commodore 64 its unmistakable sound. The Ricoh 2A03 gave the NES its characteristic pulse. But there is another chip, less famous to the general public but absolutely essential to the developers who worked with it, that changed what was possible in home gaming: the blitter.

The name comes from “BitBLT,” short for bit block transfer. The blitter was a specialized coprocessor designed to move blocks of graphics data around memory faster than the main CPU could manage. It was the secret weapon behind the Amiga’s graphical prowess, and its influence can be seen in many of the graphics chips that followed. To understand the blitter is to understand how developers squeezed every drop of performance from the hardware of the 1980s and 1990s.

The Problem: Moving Pixels with a CPU

To appreciate why the blitter was revolutionary, you first have to understand the problem it solved. In the early days of home computers and consoles, the CPU was responsible for everything. It ran the game logic, handled input, processed sound, and—most importantly—moved graphics data around.

Moving pixels was a computationally expensive task. To draw a sprite on the screen, the CPU had to read the sprite’s image data from memory, read the background data where the sprite would be placed, combine the two (accounting for transparency), and write the result back to video memory. For a single sprite, this was manageable. For a screen full of moving objects, it became a bottleneck that limited what developers could achieve.
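To make that cost concrete, here is a minimal sketch of the per-pixel work just described. It assumes a hypothetical chunky framebuffer (one list entry per pixel) and color index 0 as transparent. Real Amiga screens were planar, so this illustrates the workload rather than period-accurate code. `draw_sprite_cpu` is a hypothetical name, and it counts memory accesses to show how the work scales.

```python
# Sketch of CPU-side sprite drawing. Assumptions (for illustration only):
# a chunky framebuffer (one list entry per pixel) and color 0 = transparent.
TRANSPARENT = 0


def draw_sprite_cpu(framebuffer, sprite, x, y):
    """Composite sprite onto framebuffer at (x, y), one pixel at a time.

    Returns (reads, writes) so the cost of the loop is visible.
    """
    reads = writes = 0
    for row_index, sprite_row in enumerate(sprite):
        fb_row = framebuffer[y + row_index]
        for col_index, pixel in enumerate(sprite_row):
            reads += 1                           # read the sprite pixel
            background = fb_row[x + col_index]
            reads += 1                           # read the background pixel
            # Combine: keep the background wherever the sprite is transparent.
            fb_row[x + col_index] = background if pixel == TRANSPARENT else pixel
            writes += 1                          # write the result back
    return reads, writes


fb = [[7] * 8 for _ in range(4)]
ship = [[0, 3, 0],
        [3, 3, 3]]
print(draw_sprite_cpu(fb, ship, 2, 1))  # (12, 6)
```

Every pixel costs two reads and a write, so doubling a sprite’s width and height quadruples the work, and that cost is paid again for every object, every frame.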

The problem was compounded by the fact that early CPUs were not particularly fast. The 7 MHz 68000 in the Amiga was powerful for its time, but asking it to manage a full screen of moving graphics while also running game logic was a tall order. Something had to give.

The Solution: A Dedicated Graphics Coprocessor

The blitter was designed to offload the most demanding graphics tasks from the CPU. It was a dedicated chip that did one thing and did it exceptionally well: moving blocks of memory from one location to another, with optional logic operations applied during the move.

The blitter operated independently of the CPU. Once the CPU set up the blitter’s registers with source and destination addresses, the size of the block to move, and the operation to perform, the blitter took over. It worked in parallel with the CPU, handling the graphics transfer while the CPU continued running game logic. This parallel processing was the key to the Amiga’s graphical capabilities.
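The set-up-then-run pattern can be modeled with a thread standing in for the blitter. `BlitterModel`, `start`, and `wait` are hypothetical names, and Python threads only model the ordering of events here, not real hardware timing or bus sharing.

```python
import threading


class BlitterModel:
    """Hypothetical stand-in for a copy engine that runs alongside the CPU."""

    def __init__(self, memory):
        self.memory = memory
        self._worker = None

    def start(self, src, dst, length):
        # Never reprogram a blit that is still running: finish the old one first.
        self.wait()
        self._worker = threading.Thread(target=self._copy, args=(src, dst, length))
        self._worker.start()  # returns immediately; the copy proceeds in the background

    def _copy(self, src, dst, length):
        self.memory[dst:dst + length] = self.memory[src:src + length]

    def wait(self):
        # Plays the role of polling a "blitter busy" flag until it clears.
        if self._worker is not None:
            self._worker.join()
            self._worker = None


memory = bytearray(range(16))
blitter = BlitterModel(memory)
blitter.start(src=0, dst=8, length=4)
score = sum(range(1000))  # the "CPU" keeps running game logic meanwhile
blitter.wait()
print(list(memory[8:12]))  # [0, 1, 2, 3]
```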

How the Blitter Worked

The blitter was fundamentally a DMA (direct memory access) engine. It could read from up to three source memory locations and write to one destination, all in a single pass. The three sources allowed for sophisticated operations: one source could hold the sprite image, another could hold a mask defining transparent areas, and a third could hold the background. The blitter would combine them according to a set of logic operations, producing the final image in the destination.
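The three-source combination can be sketched as a loop over a rectangular block of 16-bit words, where each bit is one pixel of one bitplane. This is a simplified model: it assumes word-indexed pointers and modulos (the hardware used byte addresses) and omits the shifters and first/last-word masks. `blit_cookie_cut` is a hypothetical name. The modulo is what lets a small blit land inside a wider screen: after each row, every pointer skips ahead to the start of the next row.

```python
MASK16 = 0xFFFF


def blit_cookie_cut(mem, a_ptr, b_ptr, c_ptr, d_ptr, width_words, height,
                    a_mod=0, b_mod=0, c_mod=0, d_mod=0):
    """Simplified A/B/C -> D blit over a block of 16-bit words in mem.

    A = mask, B = image, C = background, D = destination.
    Each output word is (A AND B) OR (NOT A AND C): image bits where the
    mask is set, background bits everywhere else.
    """
    for _ in range(height):
        for _ in range(width_words):
            a, b, c = mem[a_ptr], mem[b_ptr], mem[c_ptr]
            mem[d_ptr] = ((a & b) | (~a & c)) & MASK16
            a_ptr += 1
            b_ptr += 1
            c_ptr += 1
            d_ptr += 1
        # End of a row: skip each pointer to the start of its next row.
        a_ptr += a_mod
        b_ptr += b_mod
        c_ptr += c_mod
        d_ptr += d_mod


# A 4-word-wide, 2-row "screen" (words 0-7), then a 1-word mask and image.
mem = [0xAAAA] * 8 + [0xFF00, 0x0F0F] + [0x1234, 0xFFFF]
blit_cookie_cut(mem, a_ptr=8, b_ptr=10, c_ptr=1, d_ptr=1,
                width_words=1, height=2, c_mod=3, d_mod=3)
print(hex(mem[1]), hex(mem[5]))  # 0x12aa 0xafaf
```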

This was all done at the hardware level, with no CPU intervention once the operation was started. A complex graphics operation that would have cost the CPU many instructions per word of data could be completed by the blitter in roughly the time it took to transfer the data, leaving the CPU free to work on other tasks, provided it was not competing with the blitter for the same memory.

The Blitter’s Operations

The blitter’s logic operations were selected by a single 8-bit value, known as the minterm, which could express any of the 256 possible Boolean functions of the three sources. Together with dedicated line-drawing and area-fill modes, this allowed for:

- Simple copying of data from one location to another
- Transparent sprite drawing using a mask
- Line drawing and area filling
- Boolean operations like AND, OR, and XOR for combining graphics
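Eight bits are enough because a Boolean function of three inputs has an eight-entry truth table, so the minterm byte can simply be that table. The sketch below uses a hypothetical `minterm` helper and evaluates a minterm bit by bit. It assumes the Amiga convention, in which bit n of the value gives the output for the input combination (A << 2) | (B << 1) | C. Under that convention, $F0 copies A, $CC copies B, $AA copies C, and $CA is the classic masked “cookie-cut” combination.

```python
COPY_A, COPY_B, COPY_C = 0xF0, 0xCC, 0xAA
COOKIE_CUT = 0xCA   # (A AND B) OR (NOT A AND C)
A_XOR_C = 0x5A


def minterm(lf, a, b, c, bits=16):
    """Apply the 8-bit logic function lf to words a, b, c, one bit position at a time.

    At each bit position the three input bits form an index
    (A << 2) | (B << 1) | C, and bit `index` of lf is the output bit.
    """
    result = 0
    for i in range(bits):
        index = (((a >> i) & 1) << 2) | (((b >> i) & 1) << 1) | ((c >> i) & 1)
        result |= ((lf >> index) & 1) << i
    return result
```

With this helper, `minterm(COOKIE_CUT, a, b, c)` gives the same result as the explicit expression `(a & b) | (~a & c)` for any 16-bit inputs, and changing the operation means changing one byte rather than rewriting a loop.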

Developers could set up complex chains of blitter operations to achieve effects that would have been impractical with the CPU alone.
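Area filling is a good example of a chained operation: a shape’s outline is drawn first, then a fill pass sets the pixels between its edges. The sketch below is a simplified, hypothetical model of that fill idea for a single row (`fill_row` is not a hardware name): scanning from right to left, a fill flag toggles at each edge pixel. The real hardware processed whole words with a fill carry passed between them and offered inclusive and exclusive variants, which this omits.

```python
def fill_row(row_bits, width=16):
    """Simplified inclusive fill of one row, scanning right to left.

    row_bits holds edge pixels as set bits (bit 0 = rightmost pixel).
    The fill flag toggles at each edge; edge pixels are kept and the
    pixels between a pair of edges are set.
    """
    inside = False
    out = 0
    for i in range(width):            # bit 0 first, i.e. rightmost pixel first
        edge = (row_bits >> i) & 1
        if edge:
            inside = not inside
        if edge or inside:
            out |= 1 << i
    return out


print(bin(fill_row(0b01000100)))  # 0b1111100
```

The toggling rule also explains why outlines meant for filling need exactly one edge pixel per row for each edge: two adjacent edge pixels would flip the flag twice and leave the span unfilled.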

The Amiga Blitter: A Case Study

The Amiga’s blitter was not a separate chip but part of Agnus, the custom chip that also acted as the memory (DMA) controller and housed the copper. Agnus worked alongside Denise (the video output) and Paula (the sound and I/O). Together, these chips made the Amiga a graphics powerhouse that outpaced its competitors for years.

The Amiga blitter operated in parallel with the CPU and the copper—another coprocessor that could execute simple programs in sync with the video display. This triple-threat approach allowed developers to achieve effects that seemed impossible on other hardware.

A simplified game loop might work like this: when the vertical blank arrived (the brief moment when the display was not being drawn), an interrupt, raised by the hardware or by the copper, would set the blitter to work drawing the next frame’s graphics into an off-screen buffer. While the blitter worked, the CPU would run game logic, update object positions, and prepare the blitter setup for the next frame. Once the new frame was complete, the buffers were swapped at the next vertical blank, so the display only ever showed finished frames.
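The frame cycle described above can be sketched as a double-buffered loop, assuming the blitter draws into an off-screen buffer while the display shows the other one; the swap itself amounts to pointing the display at the other buffer. `DoubleBufferedDisplay` and `run_frames` are hypothetical names, and a one-dimensional list of pixels stands in for the screen.

```python
class DoubleBufferedDisplay:
    """Toy model: the display scans out `front` while drawing goes to `back`."""

    def __init__(self, width):
        self.front = [0] * width   # what the viewer sees
        self.back = [0] * width    # where the next frame is drawn

    def swap_at_vblank(self):
        # Swapping only during vertical blank means no half-drawn frame is shown.
        self.front, self.back = self.back, self.front


def run_frames(display, positions):
    """Draw one object per frame at each x position, swapping at every vblank."""
    shown = []
    for x in positions:
        # "Blitter" work: clear the back buffer, then draw the object into it.
        display.back[:] = [0] * len(display.back)
        display.back[x] = 1
        # (The CPU would run game logic here while the blit proceeds.)
        display.swap_at_vblank()
        shown.append(list(display.front))
    return shown


print(run_frames(DoubleBufferedDisplay(4), [0, 1, 2]))
```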

This coordination between CPU, blitter, and copper was the hallmark of skilled Amiga programming. Developers who mastered these chips could create games with smooth scrolling, dozens of moving objects, and sophisticated visual effects that rivaled arcade machines.

Beyond the Amiga: The Blitter Legacy

The blitter was not unique to the Amiga. Similar concepts appeared in other systems, though none integrated the concept as thoroughly.

The Atari ST, the Amiga’s chief competitor, did not have a blitter in its initial release. Later models added a blitter chip, but by then the Amiga had already established its graphical superiority. The Atari ST’s lack of a blitter was one of the key reasons Amiga games often looked and moved better than their ST counterparts.

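The Sega Genesis had a blitter-like capability built into its Video Display Processor: a DMA unit that could copy data from main memory into video memory, fill regions of video memory, and copy blocks within it, much faster than the 68000 could by writing the data word by word. It was far less flexible than the Amiga’s blitter and could not scale or rotate sprites, but those fast bulk transfers were essential for keeping arcade-style games supplied with fresh graphics.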

The Commodore 64 had a sprite system whose graphics data was fetched by DMA, though it was not a general-purpose blitter in the Amiga sense. Its sprites were composited by hardware, so the CPU only had to update position registers to move them rather than redrawing pixels in memory.

The concept of a dedicated graphics coprocessor evolved into the graphics processing units of today. Modern GPUs are blitters on an unimaginable scale: massively parallel processors designed to move and transform billions of pixels per second. Every time your modern computer draws a window, scrolls a webpage, or plays a game, it is using technology whose lineage traces back, in part, to the blitter.

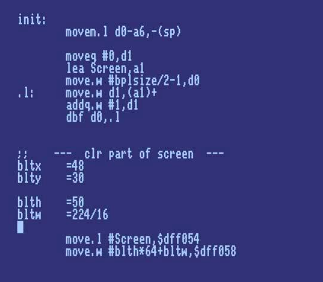

Programming the Blitter

Writing code for the blitter was a specialized skill that required deep understanding of the hardware. A typical blitter routine on the Amiga involved:

- Waiting for any previous blit to finish, since the registers must not be changed mid-operation
- Loading the source and destination address registers
- Writing the control registers to enable the channels and select the minterm
- Writing the block size, which also started the blit, so it always came last
- Either waiting for completion or continuing CPU work in parallel
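The register-programming steps can be sketched as a host-side calculation of the values an Amiga program would write for a masked (“cookie-cut”) blit. The register names (BLTCON0, BLTCON1, BLTAFWM, BLTALWM, the four modulo registers, BLTSIZE) come from the Amiga hardware documentation, but the helper functions are hypothetical. The sketch does not touch hardware, and it leaves out the pointer writes and the wait on the BBUSY flag in DMACONR that real code performs first. Writing BLTSIZE starts the blit, which is why it comes last.

```python
# Bits of BLTCON0 that enable each DMA channel.
USEA, USEB, USEC, USED = 0x0800, 0x0400, 0x0200, 0x0100
COOKIE_CUT_MINTERM = 0xCA  # D = (A AND B) OR (NOT A AND C)


def bltsize(height, width_words):
    """Pack a blit size into BLTSIZE: height in bits 15-6, width in words in bits 5-0.

    The maximum of 1024 rows and 64 words is encoded as 0 in its field.
    """
    if not (1 <= height <= 1024 and 1 <= width_words <= 64):
        raise ValueError("size out of range for the original chipset")
    return ((height & 0x3FF) << 6) | (width_words & 0x3F)


def cookie_cut_registers(width_words, height, shift, screen_row_bytes):
    """Return hypothetical (register, value) pairs for a masked blit, in write order.

    A = mask, B = image, C = background, D = destination. Mask and image are
    assumed to be stored contiguously (modulo 0); background and destination
    are rows of a wider screen. Real routines handle nonzero shifts with an
    extra word and adjusted masks, which this sketch omits.
    """
    if not 0 <= shift <= 15:
        raise ValueError("shift must be 0-15")
    screen_modulo = screen_row_bytes - width_words * 2  # modulos are in bytes
    return [
        ("BLTCON0", (shift << 12) | USEA | USEB | USEC | USED | COOKIE_CUT_MINTERM),
        ("BLTCON1", shift << 12),  # shift B by the same amount as A
        ("BLTAFWM", 0xFFFF),       # keep every bit of the first mask word
        ("BLTALWM", 0xFFFF),       # keep every bit of the last mask word
        ("BLTAMOD", 0),
        ("BLTBMOD", 0),
        ("BLTCMOD", screen_modulo),
        ("BLTDMOD", screen_modulo),
        # The BLTxPT pointer registers would also be written before BLTSIZE.
        ("BLTSIZE", bltsize(height, width_words)),  # writing this starts the blit
    ]


for name, value in cookie_cut_registers(2, 16, 0, 40):
    print(f"{name} = ${value:04X}")
```

For a 32-pixel-wide, 16-line object on a 320-pixel low-resolution screen (40 bytes per row), this yields BLTCON0 = $0FCA, modulos of 36 bytes for C and D, and BLTSIZE = $0402.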

Developers had to be careful about blitter contention. The blitter shared memory access with the CPU and the display hardware. If multiple components tried to access memory simultaneously, performance could suffer. Skilled programmers learned to schedule blitter operations during periods of low display activity, such as the vertical blank interval.

Some programmers went further and let the copper start blits on its own. The copper could wait for a specific scanline (or for the previous blit to finish), then write the blitter registers, queueing up graphics work with no CPU involvement. The copper alone, by changing display registers mid-frame, was also behind effects like split-screen displays and animated color gradients.
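A copper program is a list of two-word instructions, so a copper list that both paints a color gradient and starts a blit can be sketched as a list of word pairs. The encodings below follow the documented MOVE and WAIT formats, but `cop_move`, `cop_wait`, and `gradient_with_blit` are hypothetical helpers. Only scanlines 0 to 255 are handled, and the pointer and modulo writes a real blit needs are omitted. On the original chipset, the copper may only write blitter registers when the danger bit (CDANG in COPCON) is set.

```python
# Custom-chip register offsets (from base address $DFF000).
BLTCON0 = 0x040
BLTSIZE = 0x058
COLOR00 = 0x180

# Conventional end-of-list marker: wait for a beam position that never arrives.
COP_END = (0xFFFF, 0xFFFE)


def cop_move(register, value):
    """MOVE: write a 16-bit value to a custom-chip register (even offset)."""
    if register % 2:
        raise ValueError("register offsets must be even")
    return (register, value & 0xFFFF)


def cop_wait(line, hpos=0, wait_for_blitter=False):
    """WAIT for beam position (line, hpos); lines 0-255 only in this sketch.

    Bit 0 of the first word marks the instruction as a WAIT. Clearing bit 15
    of the second word (blitter-finished-disable) makes the copper also wait
    until the blitter is idle.
    """
    if not 0 <= line <= 255:
        raise ValueError("this sketch only handles lines 0-255")
    first = (line << 8) | (hpos & 0xFE) | 1
    second = 0x7FFE if wait_for_blitter else 0xFFFE
    return (first, second)


def gradient_with_blit(start_line, colors, blit_size):
    """Hypothetical copper list: one background color per line, then a blit."""
    program = []
    for i, color in enumerate(colors):
        program.append(cop_wait(start_line + i))
        program.append(cop_move(COLOR00, color))  # copper-only effect
    # Wait for the next line and for any earlier blit to finish.
    program.append(cop_wait(start_line + len(colors), wait_for_blitter=True))
    program.append(cop_move(BLTCON0, 0x09F0))     # channels A and D, minterm $F0 (copy A)
    # (Pointer and modulo MOVEs would go here.)
    program.append(cop_move(BLTSIZE, blit_size))  # this write starts the blit
    program.append(COP_END)
    return program


for first, second in gradient_with_blit(0x40, [0x000, 0x111, 0x222], 0x0402):
    print(f"dc.w ${first:04X},${second:04X}")
```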

The End of an Era

As CPU speeds increased and graphics hardware became more sophisticated, the need for a dedicated blitter diminished. Later Amigas shipped with 68020, 68030, and 68040 processors that could often move graphics data as fast as the blitter, or faster. The blitter concept was absorbed into more general-purpose graphics hardware.

But the legacy of the blitter lives on. The idea of offloading repetitive graphics tasks to dedicated hardware is fundamental to modern computing. Every GPU, every graphics accelerator, every video card is a descendant of the blitter concept. The efficiency gained by parallel processing—doing graphics work separately from CPU work—is as relevant today as it was in 1985.

Why the Blitter Matters

The blitter mattered because it enabled a generation of games that would have been far harder, if not impossible, to build otherwise. Games like Shadow of the Beast with its multi-layered parallax scrolling, Another World with its cinematic rotoscoped animation, and Speedball 2 with its smooth, fast-paced action depended on the Amiga’s custom chips, the blitter among them, to run on the hardware of the time.

But the blitter mattered for another reason as well. It taught developers that hardware could be designed to solve specific problems efficiently. It showed that the right architecture could multiply the capabilities of a modest CPU. And it inspired a generation of programmers to think about parallelism, about offloading work to dedicated units, about the art of making hardware and software work together.

For those who programmed the blitter, it was more than a chip. It was a collaborator. It was the part of the machine that turned your code into something that moved, that lived, that played. And for the players who enjoyed the games built with it, the blitter was the invisible hand that made the magic happen.

Today, when you play a modern game with thousands of objects on screen, each rendered with realistic lighting and physics, you are seeing the distant descendant of the blitter. The scale has changed beyond recognition, but the principle remains: let dedicated hardware do what it does best, so the programmer can focus on making the game come alive.
